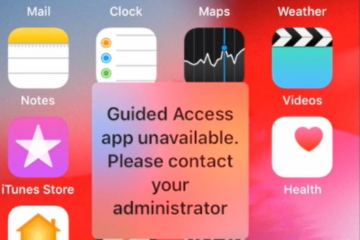

Don’t panic, there is always a solution.

During and after my training and studies, I worked as a software architect, trainer for apprentices, and team leader for consulting companies. My main area of work has been cloud and software architectures, but in recent years I have specialized in continuous architecture/integration/delivery/deployment. I am particularly interested in the distribution of on-premises and SaaS software, especially through marketplaces like Azure Marketplace and AWS Marketplace.

To avoid losing touch with technical issues because of this high-level work, I assist in troubleshooting tricky software-related problems.

Since 2004 I have been using this private blog to document solutions for which I could not find a direct answer on Google. Over the years, topics from all sorts of areas have accumulated, many of them not directly related to my actual work. Since I started working at our own company in 2022, I have been using our company’s knowledge base for work-related documentation.

If you need professional assistance with your network/infrastructure/cloud/automation problem, feel free to email me. Even though this is outside my specialization, I am always up for a challenge.